How to Vibe Code like a Google Engineer

Leveraging spec-driven development to code like a pro

We interrupt your regular programming to bring you a technical deep dive!

The one piece of consistent feedback I’ve been getting from my followers for the last year or so is that they miss the days when I would do technical deep dives. Yet, I’ve found myself gravitating towards strategic and industry opinion-based articles since that aligns better with my current role and interests.

But I hear you, and I want to give the people what they want! So instead of me writing half-assed technical pieces, I asked my partner Drew (former Google engineer and certified Tech Nerd) if he would please write some technical deep dives for you all.

So be nice to him or else 👊

~ Love, Carly

Hi, Drew here…

First, a little about myself. I’ve been in the tech industry for nearly 20 years and a curious technologist my entire life.

In my engineering and customer-facing roles at Cisco, Docker, and Google, I prided myself on keeping up with the latest technologies and trends to better advocate for my customers. I lean technical in my interests and will be complementing Carly’s voice as a guest writer for Good at Business (but I definitely won’t be as funny or as charming as she is - also I let her edit this and didn’t review her edits).

Let’s get into it.

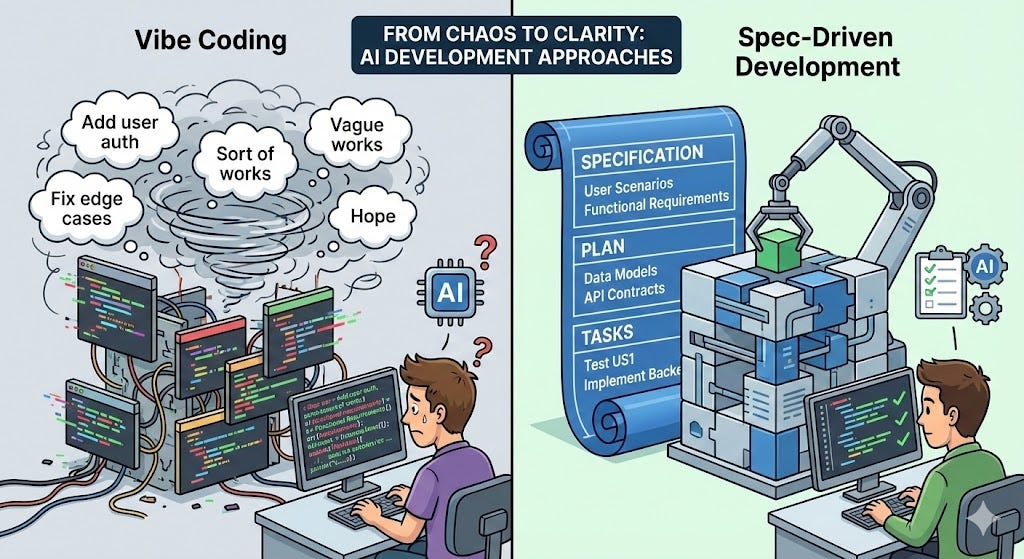

The Problem with “Vibe Coding”

If you’ve used AI coding assistants like GitHub Copilot, Claude Code, or Cursor, you’ve probably experienced this pattern:

Give AI a vague prompt: “Add user authentication”

Get back some code that sort of works

Realize it doesn’t handle edge cases

Prompt again: “Add password reset functionality”

Watch as the AI makes assumptions that conflict with your earlier code

Spend hours debugging inconsistencies

This is what the industry now calls “vibe coding” which, while hilarious and fun, is a chaotic, reactive development process that can’t really handle creating production-level code. That’s because intent is communicated through iterative prompts rather than structured specifications.

Just as an engineer IRL can’t build a functional feature from single-sentence Slack instructions from their stakeholders (although, who amongst us hasn’t suffered through this at times?), an AI assistant can’t build one from a one-line prompt. A better approach is to start with detailed product specifications.

In short, vibe coding works for small experiments, but falls apart at production scale.

So, I set out to build an app using a different approach.

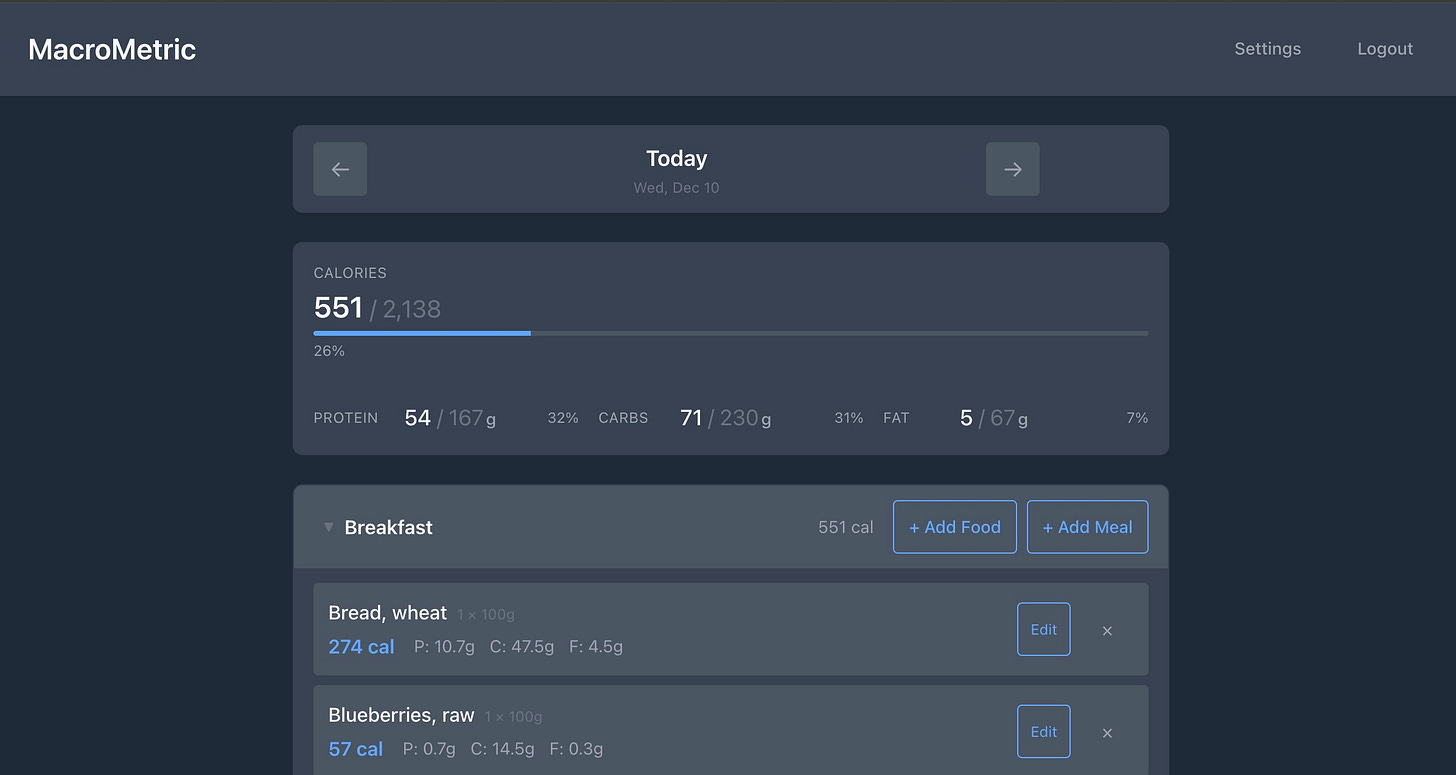

As a lover of all things fitness (and a Certified Hater™ of MyFitnessPal) I decided that I would build a full-stack nutrition tracking app called MacroMetric using an approach that’s been gaining serious traction in 2025: AI spec-driven development.

Instead of iteratively prompting AI coding assistants with vague instructions and hoping for the best, I wrote detailed specifications first, then let AI agents handle the implementation.

The results? 186 completed tasks, 94% of backend tests passing (159 of 169), and a working production app, all with dramatically less chaos than traditional “vibe coding.” (Maybe if you’re lucky I will tell you about the time Carly and I vibe coded a video game while day drinking. Talk about chaotic.)

So here’s what I learned building MacroMetric entirely with this approach, and why you might want to try it for your next project.

Enter Spec-Driven Development

Spec-driven development (SDD) flips the script: write specifications before code. Your spec becomes the single source of truth defining what you’re building and why, without dictating how. AI agents then use these specs to generate consistent, testable implementations.

The core philosophy: “Intent is the source of truth.”

Instead of:

You → Prompt → AI → Code → Bugs → More prompts → Fixes → Hope

You get:

You → Spec → AI → Plan → Tasks → Implementation → Tests (that pass)

Choosing a Framework: The Landscape in 2025

Before starting MacroMetric, I researched the major players in the spec-driven development space:

GitHub Spec Kit

Philosophy: Structured, gated 4-phase workflow (Specify → Plan → Tasks → Implement)

Best for: Greenfield projects (0→1), teams needing strict compliance

Strengths: Deep architecture planning, comprehensive templates, explicit checkpoints

Trade-offs: More verbose specs (~800 lines), steeper learning curve, 8 AI commands

OpenSpec (Fission AI)

Philosophy: Lightweight, change-centric, brownfield-focused

Best for: Evolving existing codebases (1→n), rapid iteration

Strengths: Simple setup (npm install), concise docs (~250 lines), 3 commands

Trade-offs: Less structure for complex greenfield projects, no auto git branching

BMAD-METHOD

Philosophy: Agentic agile with specialized AI personas and role-planning

Best for: Complex domain logic, early architecture debates

Strengths: 55% faster completion in studies, deep role specialization

Trade-offs: Most complex setup, potentially overkill for straightforward apps

My Choice: Spec Kit (with customization)

I chose GitHub Spec Kit because MacroMetric was a greenfield project that needed:

Clear architectural boundaries (React frontend + FastAPI backend)

Consistent patterns across multiple features

Strong testing discipline from day one

A framework that could scale from MVP to production

The selection guidance from my research was clear: “For pioneering greenfield projects with straightforward architecture but strict compliance, spec-kit is safer.”

The MacroMetric Journey: Building with Structure

Constitution as Foundation

Before writing a single line of code, I created a project constitution (specs/.specify/memory/constitution.md)—a living document defining:

Core Principles:

Modern Web Application: React SPA + FastAPI backend

Semantic HTML: Accessibility and SEO first

User-Centric Design: Core workflows in <3 clicks, <500ms API responses

Performance: Initial render <2s, optimize for speed

Simplicity: No unnecessary dependencies

Security & Privacy: bcrypt passwords, 30min access tokens, HTTPS required

Testing: Automated tests for all features (pytest, Jest, Playwright)

Version Control: Conventional commits, atomic changes, feature branches

Technology Stack:

Frontend: React 18 + TypeScript, Vite, Tailwind CSS

Backend: FastAPI (Python 3.11+), SQLAlchemy, PostgreSQL

Testing: pytest, Jest + React Testing Library, Playwright E2E

This constitution became guardrails for the AI. Every feature spec was validated against these principles. When the AI suggested adding a new framework or pattern, the constitution provided clear rejection criteria.
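One way to picture those rejection criteria is as a plain allow-list check. This is only an illustrative sketch: Spec Kit enforces the constitution through prompts and markdown templates, not Python, and the names `ALLOWED_STACK` and `check_dependencies` are hypothetical.

```python
# Hypothetical allow-list, loosely derived from the Technology Stack above.
ALLOWED_STACK = {
    "react", "typescript", "vite", "tailwindcss",
    "fastapi", "sqlalchemy", "postgresql",
    "pytest", "jest", "playwright",
}


def check_dependencies(proposed: list[str]) -> list[str]:
    """Return the proposed dependencies that the allow-list does not cover."""
    return sorted({dep.lower() for dep in proposed} - ALLOWED_STACK)


# Example: an AI plan that quietly adds two new frameworks.
violations = check_dependencies(["React", "FastAPI", "redux", "Django"])
if violations:
    print("Needs justification under 'Simplicity':", ", ".join(violations))
```

Anything the check returns is a prompt for a conversation, not an automatic "no". The point is that the question gets asked before the dependency lands.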

The 4-Phase Workflow

Every feature in MacroMetric followed this rigorous process:

1. Specify (/speckit.specify)

I’d describe a feature in natural language:

/speckit.specify “Create Goals and Custom Foods management pages in the Settings section. These pages should use the previous functionality created for these features. This new specification will allow users to update their Goals and Custom Foods outside of the onboarding flow and diary page.”

The AI would:

Generate a short branch name (005-settings-goals-foods)

Create a feature spec (specs/005-settings-goals-foods/spec.md)

Fill in structured sections:

User Scenarios (Given/When/Then acceptance criteria)

Functional Requirements (FR-001, FR-002, etc.)

Success Criteria (measurable, technology-agnostic)

Edge Cases

Run a quality checklist validation

Real Example from 005-settings-goals-foods/spec.md:

### User Story 1 - Daily Goals Management (Priority: P1)

A user wants to view and edit their daily nutritional goals (calories,

protein, carbs, fat) from the Settings page.

**Why this priority**: Goals are fundamental to the app’s value proposition.

**Independent Test**: Can be fully tested by navigating to Settings > Goals,

entering/modifying goal values, saving, and verifying the diary page shows

updated progress bars.

**Acceptance Scenarios**:

1. **Given** a user navigates to Settings, **When** they click the “Goals”

tab, **Then** they see their current daily goals or a prompt to set goals

2. **Given** a user enters invalid data (negative numbers, non-numeric values),

**When** they try to save, **Then** they see clear error messages

The spec was completely technology-agnostic. No mention of React, FastAPI, or databases.

2. Plan (/speckit.plan)

The AI then created a technical plan (plan.md):

## Technical Context

**Language/Version**: TypeScript 4.9+, React 18; Python 3.11+

**Primary Dependencies**: React, Axios, FastAPI, SQLAlchemy

**Storage**: PostgreSQL (existing tables: `daily_goals`, `custom_foods`)

**Testing**: Jest, React Testing Library, pytest

## Constitution Check ✅

### I. Modern Web Application ✅

**Status**: PASS

**Rationale**: This feature extends the existing React SPA frontend.

No architectural changes required.

### V. Simplicity ✅

**Status**: PASS

**Rationale**: Zero new dependencies. Reuses existing API services,

Tailwind patterns, validation approaches.

Every feature was validated against all 8 constitutional principles before proceeding. This caught architectural drift early.

The plan also included:

Data models (DailyGoal entity with calories, protein, carbs, fat)

API contracts (GET/PUT endpoints with request/response schemas)

Source structure (which files to create/modify)

Performance targets (form submissions <500ms)
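To make "data models" and "API contracts" a bit more concrete, here is a minimal standard-library sketch. It is not MacroMetric's actual code: the real project uses FastAPI and SQLAlchemy, and the in-memory `_GOALS` store and the `get_goals` / `put_goals` function names are assumptions made purely for illustration.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class DailyGoal:
    """The four fields named in the plan; units (kcal, grams) are assumed."""
    calories: int
    protein: int
    carbs: int
    fat: int


# Hypothetical in-memory stand-in for the `daily_goals` table, keyed by user id.
_GOALS: dict[int, DailyGoal] = {}


def get_goals(user_id: int) -> Optional[dict]:
    """Sketch of GET /goals: return the user's goals, or None if none are set."""
    goal = _GOALS.get(user_id)
    return asdict(goal) if goal is not None else None


def put_goals(user_id: int, payload: dict) -> dict:
    """Sketch of PUT /goals: create or replace the user's goals."""
    goal = DailyGoal(**payload)  # raises TypeError on missing or unknown fields
    _GOALS[user_id] = goal
    return asdict(goal)
```

Even at this size, the contract answers questions the spec deliberately left open: what the response looks like before goals exist, and whether PUT replaces or merges.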

3. Tasks (/speckit.tasks)

The AI broke the plan into atomic, testable tasks following strict TDD:

## Phase 1: Goals Management (US1)

### Tests for US1 (Write FIRST - must FAIL)

- [ ] T001 [P] [US1] Write goals API contract tests in backend/tests/contract/test_goals.py

- [ ] T002 [P] [US1] Write GoalInput component tests in frontend/tests/components/GoalInput.test.tsx

### Backend Implementation for US1

- [ ] T003 [US1] Create DailyGoal model in backend/src/models/daily_goal.py

- [ ] T004 [US1] Create migration for DailyGoal table in backend/alembic/versions/

- [ ] T005 [US1] Implement goals API endpoints (GET/PUT /goals) in backend/src/api/goals.py

### Frontend Implementation for US1

- [ ] T006 [US1] Create GoalInput component in frontend/src/components/GoalInput/index.tsx

- [ ] T007 [US1] Update MacroDisplay to show progress toward goals

- [ ] T008 [US1] Run frontend US1 tests - verify PASS

Key patterns:

[P] = Can run in parallel (different files, no dependencies)

[US1] = Maps to User Story 1 from the spec

TDD mandate: Write tests → FAIL → Implement → PASS

For MacroMetric, this generated 186 total tasks across 5 major features.
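Because the task lines follow such a regular shape, they are easy to parse if you want to build your own tooling around tasks.md. The sketch below assumes the exact line format shown in the excerpt above; `TASK_RE`, `Task`, and `parse_task` are hypothetical names, not part of Spec Kit.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Matches lines such as:
# - [ ] T001 [P] [US1] Write goals API contract tests in backend/tests/...
TASK_RE = re.compile(
    r"^- \[(?P<done>[ xX])\] (?P<id>T\d+)"
    r"(?P<parallel> \[P\])?"
    r"(?: \[(?P<story>US\d+)\])?"
    r" (?P<description>.+)$"
)


@dataclass
class Task:
    id: str
    done: bool
    parallel: bool
    story: Optional[str]
    description: str


def parse_task(line: str) -> Optional[Task]:
    """Parse one checklist line; return None for headings and other text."""
    m = TASK_RE.match(line.strip())
    if m is None:
        return None
    return Task(
        id=m["id"],
        done=m["done"].lower() == "x",
        parallel=m["parallel"] is not None,
        story=m["story"],
        description=m["description"],
    )


lines = [
    "### Tests for US1 (Write FIRST - must FAIL)",
    "- [ ] T001 [P] [US1] Write goals API contract tests",
    "- [x] T003 [US1] Create DailyGoal model",
]
tasks = [t for t in map(parse_task, lines) if t is not None]
parallel_ids = [t.id for t in tasks if t.parallel and not t.done]
```

From here it is a short step to progress reports per user story, or to handing every open `[P]` task to a separate agent.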

4. Implement (/speckit.implement)

Finally, the AI executed tasks sequentially:

Write the test (must fail)

Implement the feature

Run tests (must pass)

Mark task complete

Move to next task

Real outcome from the settings-goals-foods feature:

32 passing tests (100% of test tasks)

Optimistic UI updates with rollback on error

Full ARIA labels and accessibility

Form validation preventing all invalid submissions
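If "optimistic UI updates with rollback on error" is new to you, the idea fits in a few lines. The real thing lives in the React frontend; this is a language-agnostic sketch written in Python, and `GoalsStore` and its `save` callback are hypothetical names.

```python
from typing import Callable, Optional


class GoalsStore:
    """Holds the goals shown in the UI and applies updates optimistically."""

    def __init__(self, goals: dict):
        self.goals = dict(goals)
        self.error: Optional[str] = None

    def update(self, changes: dict, save: Callable[[dict], None]) -> bool:
        previous = dict(self.goals)             # copy to roll back to
        self.goals = {**self.goals, **changes}  # show new values immediately
        try:
            save(self.goals)                    # e.g. the PUT /goals request
        except Exception as exc:
            self.goals = previous               # rollback on failure
            self.error = str(exc)
            return False
        self.error = None
        return True
```

The user sees their change instantly, and a failed save restores the old values along with an error message instead of leaving the screen out of sync with the server.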

Practical Takeaways for Your First Spec-Driven Project

1. Start Small and Think Architecturally

Don’t spec your entire app upfront. Build one feature with the workflow to learn the patterns. I started with authentication (foundational but familiar).

And specs made me answer hard questions upfront:

What are the independent user journeys?

Which features can be tested in isolation?

What are the edge cases?

Example: The spec forced me to decide before coding how deleted custom foods should behave in saved meals (answer: retain nutritional snapshot, show “(deleted)” indicator).
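That "retain nutritional snapshot" decision maps to a simple data shape: copy the values into the meal at save time instead of looking them up later. Here is a minimal sketch with hypothetical class and field names. It is not the actual MacroMetric schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Nutrition:
    calories: float
    protein: float
    carbs: float
    fat: float


@dataclass
class CustomFood:
    id: int
    name: str
    nutrition: Nutrition
    deleted: bool = False


@dataclass
class MealItem:
    """Copies name and nutrition when saved, so later deletes cannot change it."""
    food_id: int
    name_snapshot: str
    nutrition_snapshot: Nutrition

    @classmethod
    def from_food(cls, food: CustomFood) -> "MealItem":
        return cls(food.id, food.name, food.nutrition)

    def display_name(self, foods_by_id: dict[int, CustomFood]) -> str:
        food = foods_by_id.get(self.food_id)
        if food is None or food.deleted:
            return f"{self.name_snapshot} (deleted)"
        return self.name_snapshot
```

The alternative, joining against the live food record, is less code on day one but silently rewrites a user's history the moment they clean up their food list.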

2. Invest in Your Constitution

Spend a day writing your project principles, tech stack, and quality standards. This is the most valuable artifact you’ll create.

And remember - vague specs produce vague implementations. I learned this the hard way.

Bad spec (too vague):

FR-001: System MUST allow users to manage goals

Good spec (testable, unambiguous):

FR-003: System MUST allow users to edit and save their daily goals

with numeric input fields

FR-004: System MUST validate goal inputs (numeric values, non-negative)

and display clear error messages for invalid data
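A quick test of whether a requirement is unambiguous: can you turn it into a function almost mechanically? FR-004 passes. The sketch below is one possible reading of it, not MacroMetric's implementation; `validate_goals` and the exact error wording are assumptions.

```python
import math

GOAL_FIELDS = ("calories", "protein", "carbs", "fat")


def validate_goals(raw: dict) -> dict[str, str]:
    """Return a field -> error message map; an empty dict means valid input."""
    errors: dict[str, str] = {}
    for field in GOAL_FIELDS:
        try:
            number = float(raw.get(field))
        except (TypeError, ValueError):
            # Missing values and non-numeric strings both land here.
            errors[field] = f"{field} must be a number"
            continue
        if not math.isfinite(number):
            errors[field] = f"{field} must be a finite number"
        elif number < 0:
            errors[field] = f"{field} cannot be negative"
    return errors
```

Try the same exercise with FR-001 ("manage goals") and you immediately have to start guessing, which is exactly what the AI would do too.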

The AI can only be as precise as your requirements, so include:

Core architectural constraints

Security requirements

Performance targets

Testing philosophy

Updating your constitution can also be costly. When I added the “Version Control” principle (Principle VIII) to the constitution mid-project, I had to:

Update the constitution with a sync impact report

Verify all existing specs still passed

Update all templates to reference the new principle

Re-validate plan files

Get your constitution right early. Changes propagate everywhere.

3. Use Checklist Validation Ruthlessly

The /speckit.specify command generates a quality checklist. Actually use it:

## Content Quality

- [ ] No implementation details (languages, frameworks, APIs)

- [ ] Focused on user value and business needs

- [ ] All mandatory sections completed

## Requirement Completeness

- [ ] Requirements are testable and unambiguous

- [ ] Success criteria are measurable

- [ ] Edge cases are identified

If items fail, fix the spec before planning. Bad specs compound into bad plans into bad code.
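If you want the gate to be more than good intentions, it is easy to check mechanically. This is a small sketch that assumes the checklist uses standard markdown checkboxes as in the excerpt above; `unchecked_items` and `ready_to_plan` are hypothetical helpers, not Spec Kit commands.

```python
import re

CHECKBOX_RE = re.compile(r"^\s*- \[(?P<mark>[ xX])\] (?P<text>.+)$")


def unchecked_items(checklist_md: str) -> list[str]:
    """Return the text of every unchecked '- [ ]' item in a markdown checklist."""
    items = []
    for line in checklist_md.splitlines():
        m = CHECKBOX_RE.match(line)
        if m and m["mark"] == " ":
            items.append(m["text"].strip())
    return items


def ready_to_plan(checklist_md: str) -> bool:
    """Gate: only move on to planning when nothing is left unchecked."""
    return not unchecked_items(checklist_md)


checklist = """\
## Content Quality
- [x] No implementation details (languages, frameworks, APIs)
- [ ] Focused on user value and business needs
"""
print(unchecked_items(checklist))
```

A check like this could run in a pre-commit hook or CI step so a spec with open items never reaches the planning phase.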

4. Embrace the TDD Discipline

Write tests first. Make them fail. Then implement. This felt slow initially but prevented massive debugging sessions later.

MacroMetric’s test suite:

159/169 backend tests passing (94%)

Full frontend coverage

E2E tests for authentication + food logging

All written before implementation.

5. Don’t Over-Clarify

Max 3 [NEEDS CLARIFICATION] markers per spec. Make informed guesses and document assumptions instead.

Ask AI for clarification when:

Decision significantly impacts scope

Multiple interpretations with different implications

No reasonable default exists

Don’t ask AI when:

Industry standards exist (use those)

Your constitution already defines it

You can make a reasonable guess
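The three-marker cap is simple to enforce with a string count. The sketch below assumes markers start with `[NEEDS CLARIFICATION` (so it matches both a bare marker and one followed by a question); the function names and the sample spec text are made up for illustration.

```python
MAX_CLARIFICATIONS = 3  # the cap suggested above
MARKER = "[NEEDS CLARIFICATION"  # prefix match: bare or with ": question]"


def clarification_count(spec_md: str) -> int:
    """Count clarification markers in a spec."""
    return spec_md.count(MARKER)


def over_clarified(spec_md: str) -> bool:
    """True when the spec has more open questions than the cap allows."""
    return clarification_count(spec_md) > MAX_CLARIFICATIONS


spec = (
    "FR-007: System MUST retain deleted foods in saved meals\n"
    "FR-008: System MUST export data [NEEDS CLARIFICATION: which formats?]\n"
)
print(clarification_count(spec), over_clarified(spec))
```

If a spec trips the cap, that is usually a sign the feature is too big or the constitution is missing a default, not that you need a longer Q&A session.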

6. Let Templates Guide You

The templates are training wheels. Use them strictly for your first 2-3 features, then customize based on patterns you discover.

I added domain-specific sections after feature 2:

USDA API Integration patterns

Nutritional Data Validation rules

Macro Display standards

The Future: AI Needs Adult Supervision

Here’s my honest take: Spec-driven development is adult supervision for AI coding.

AI agents are incredible at implementation but terrible at product direction. They need:

Clear intent (specs)

Architectural boundaries (constitution)

Quality gates (checklists)

Structured feedback (test-driven development)

Without these, you get vibe coding, which, as we’ve already established, is fast but chaotic.

With spec-driven development, you get:

95%+ accuracy on first implementation

Consistent patterns across features

Living documentation that stays current

Test coverage that actually exists

Is it slower initially? Yes. My first spec took 2 hours.

Is it faster overall? Absolutely. Feature 5 went from idea to 32 passing tests in one session. No debugging and no annoying “let me fix that” prompts.

Try It Yourself

MacroMetric is open source and fully documented with all specs, plans, and tasks visible in the specs/ directory. See the code here:

001-macro-calorie-tracker - Initial MVP (186 tasks)

003-ui-ux-tailwind - Complete design system migration

005-settings-goals-foods - Settings pages with full CRUD

Each feature includes:

spec.md - Technology-agnostic requirements

plan.md - Technical design with constitution checks

tasks.md - Atomic TDD tasks

Implementation - The actual working code

Clone it. Read the specs. See how intent translates to implementation.

The tools are free (Spec Kit and OpenSpec are both MIT-licensed). The learning curve is real. But if you’re building production software with AI assistants in 2025, structured beats chaotic every time.

Questions? Hit me up in the comments or on LinkedIn. I’m still learning, but happy to share what worked (and what didn’t) in this wild new world of spec-driven AI development.

And as always, share if this resonated with you and recommend Good at Business to your friends, family, and enemies.

✌️ Drew

